Proof of Some Bizarre Effect in the CNN Survey

As a survey trainer, I find people are skeptical about the impact of biases upon a survey’s results.

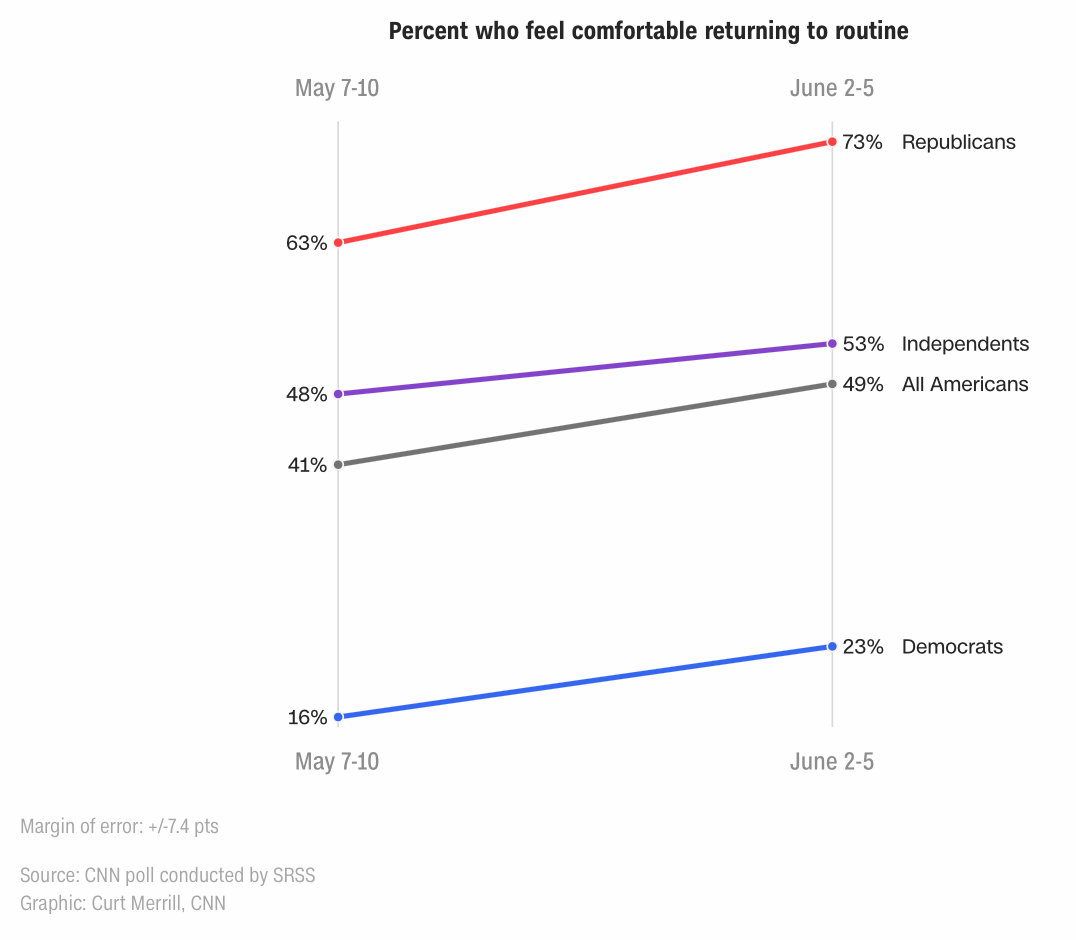

This poll provides indisputable proof of some bias distorting the data. The pollsters provide some detail about the polling methodology, and they report the sampling error, which is driven by the number of responses. But they provide no discussion of sample bias or conformity bias.

A moment’s reflection upon their findings shows something is in play.

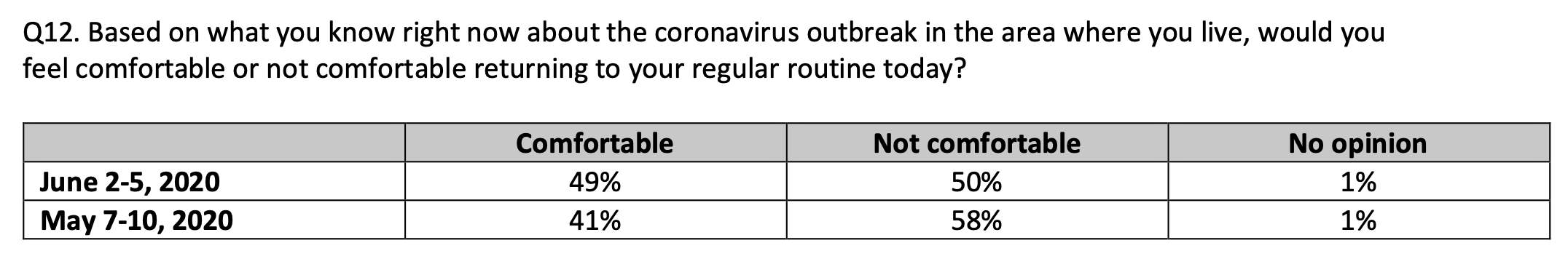

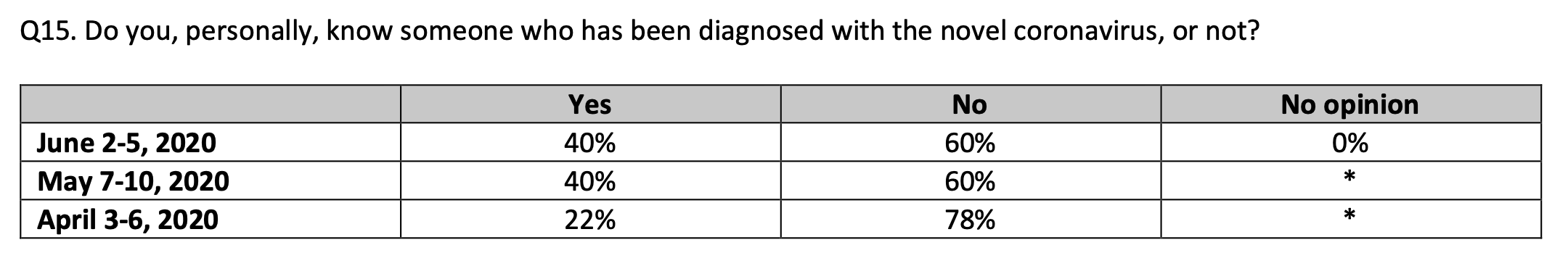

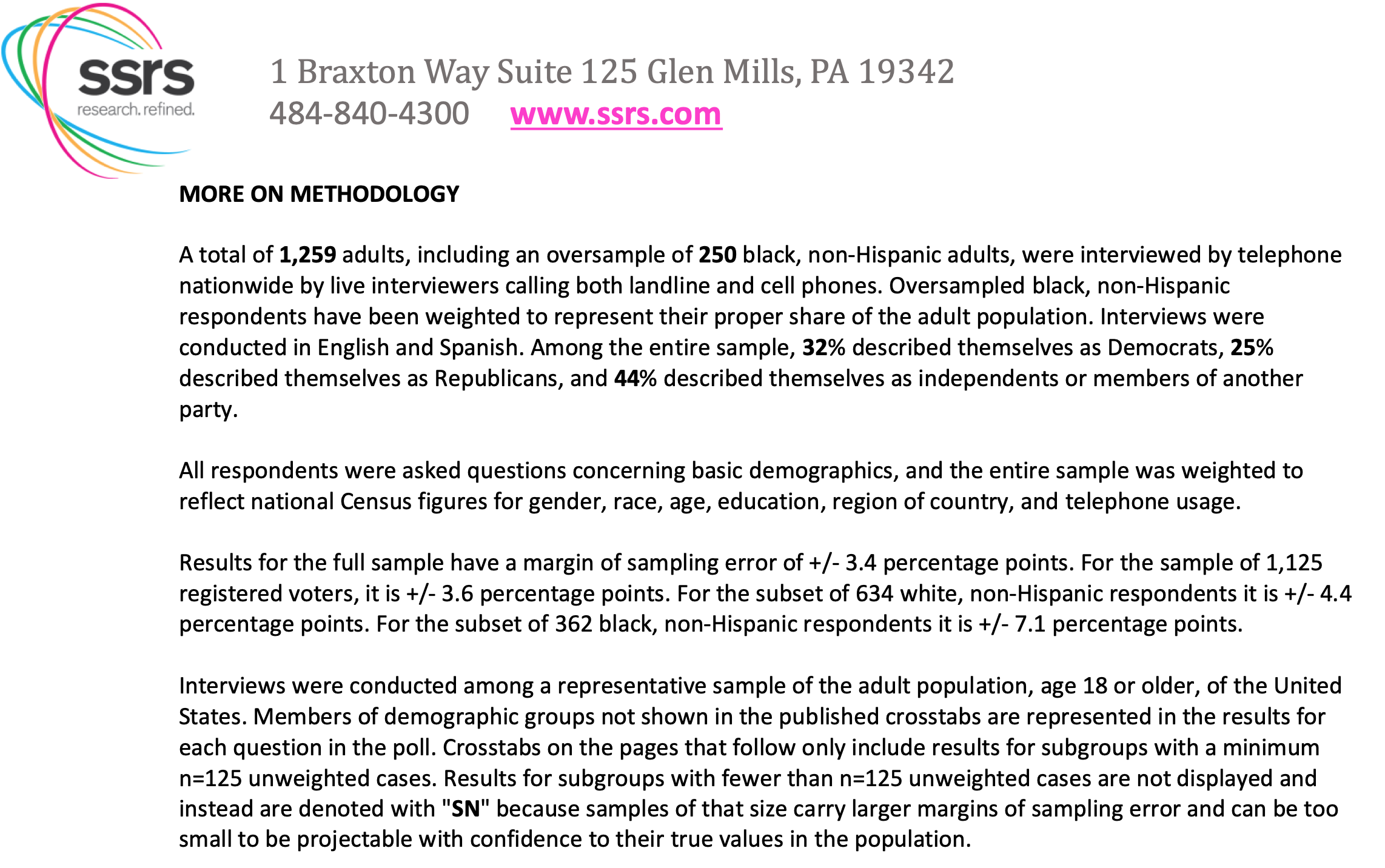

Look at the nearby results taken from CNN’s detail report for Question 15, which asks if “you, personally, know someone who has been diagnosed with the novel coronavirus.”

CNN Poll: % Know Someone Covid Positive

The number of positive cases in the US increased by 263% from April 6 to May 10 and by 43% from May 10 to June 5. The percent saying they knew a Covid-19 positive person almost doubled from April to May.

Yet the percent of people knowing someone who tested positive stayed flat from May to June. How is that possible?

The percentage clearly should have tracked up! (Going down would be truly odd, since the case count is cumulative.) As the number of positive cases increases, the probability of any one person knowing someone who tested positive rises with it, because every new case brings its own circle of acquaintances. (The process would parallel the logic of the virus spread.) It is highly unlikely that sampling error alone can explain a flat result.
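
One way to put a rough number on "should have tracked up" is a back-of-the-envelope model. This is an illustration, not anything CNN or the poll reports. It assumes each respondent's acquaintances are independently positive with a small probability proportional to the national case count. Under that assumption, the share who know no positive person is raised to the power of the case growth factor. The function name `expected_share` is hypothetical.

```python
# Rough sketch, ASSUMING random mixing: every acquaintance is independently
# positive with a small probability proportional to the cumulative case count.
# Then P(know nobody positive) is about exp(-k * p), so multiplying p by a
# growth factor g raises that probability to the power g.

def expected_share(prior_share, case_growth_pct):
    """Share expected to know a positive person after cases grow by case_growth_pct."""
    growth_factor = 1 + case_growth_pct / 100
    return 1 - (1 - prior_share) ** growth_factor

# May 10 poll: 40% knew someone; cases then grew 43% by June 5.
june_expected = expected_share(0.40, 43)
print(f"Expected June share under this model: {june_expected:.0%}")
```

Under these simplifying assumptions the June figure would land near 52%, well above the 40% that was reported. Real social networks are clustered, so the model is only a sanity check on the direction and rough size of the expected change.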

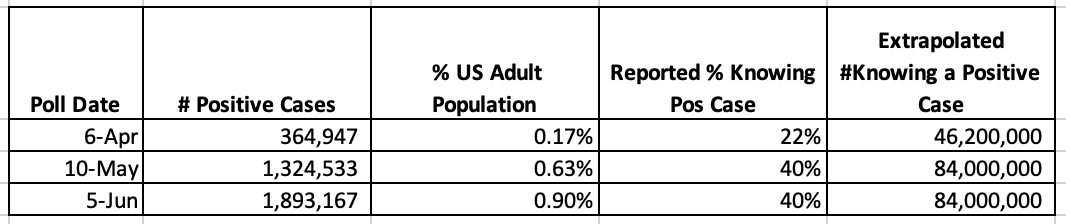

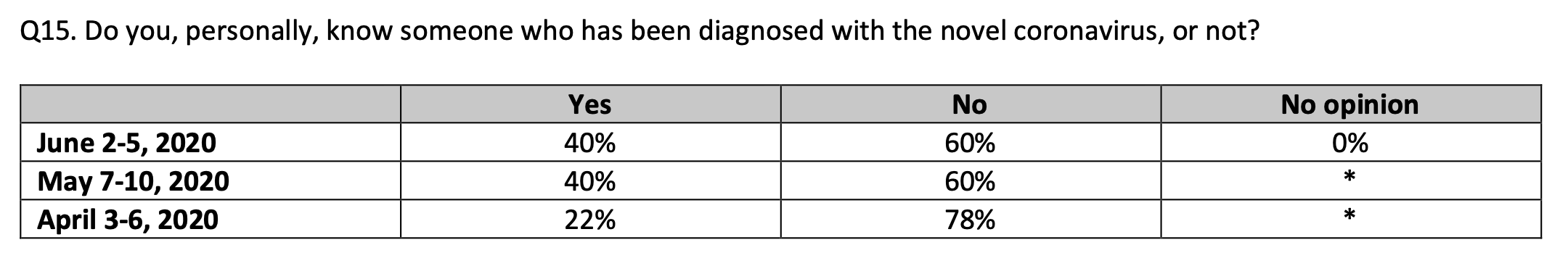

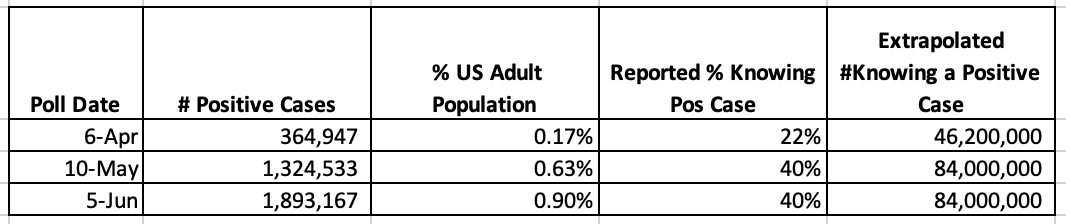

Further, look at the nearby table.

- For the April 6 polling, 22% of people reported knowing at least one of the 365,000 people who had tested positive, which is roughly 0.17% of the US adult population.

- For the May 10 polling, 40% of people reported knowing at least one of the 1,325,000 people who had tested positive, which is roughly 0.63% of the US adult population.

- For the June 5 polling, 40% of people reported knowing at least one of the 1,893,000 people who had tested positive, which is roughly 0.90% of the US adult population.
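
The shares and growth rates quoted in this article can be checked with a few lines of arithmetic. The population base of roughly 210 million is an assumption inferred from the quoted percentages; the article does not state the figure it used. The helper names are hypothetical.

```python
# Cumulative US positive-case counts quoted in the list above.
cases = {"April 6": 365_000, "May 10": 1_325_000, "June 5": 1_893_000}

# ASSUMPTION: a base of about 210 million people, chosen because it
# reproduces the quoted shares; the article does not give this number.
POPULATION_BASE = 210_000_000

def share_of_population(count, population=POPULATION_BASE):
    """Cases as a percentage of the population base."""
    return 100 * count / population

def percent_increase(old, new):
    """Percentage growth from old to new."""
    return 100 * (new - old) / old

shares = {date: round(share_of_population(n), 2) for date, n in cases.items()}
growth_apr_may = round(percent_increase(cases["April 6"], cases["May 10"]))
growth_may_jun = round(percent_increase(cases["May 10"], cases["June 5"]))

print(shares)
print(growth_apr_may, growth_may_jun)
```

With that base, the shares come out to 0.17%, 0.63%, and 0.90%, and the case growth to 263% and 43%, matching the figures quoted in the text.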

Count Knowing Covid Positive Person

If the polling data are accurate, one helluva lot of people knew each person who was positive with Covid-19. Extrapolating to the US adult population for the June 5 polling, each Covid-19 positive person was known by 44 people on average. (For May it would be 63 people, and for April, 127 people.)
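
The extrapolation above can be reproduced as a short sketch. It assumes an adult population of about 210 million, a figure inferred from the quoted results rather than stated in the article, and it counts each respondent as knowing exactly one positive person, so the results are a floor rather than a precise estimate. The function name `implied_known_by` is hypothetical.

```python
# ASSUMPTION: roughly 210 million US adults (inferred, not stated in the article).
ADULT_POPULATION = 210_000_000

# (poll date, share who say they know a positive person, cumulative positive cases)
polls = [
    ("April 6", 0.22, 365_000),
    ("May 10", 0.40, 1_325_000),
    ("June 5", 0.40, 1_893_000),
]

def implied_known_by(share_knowing, positive_cases, population=ADULT_POPULATION):
    """Average number of adults who would have to know each positive person."""
    return share_knowing * population / positive_cases

known_by = {date: round(implied_known_by(share, n)) for date, share, n in polls}
print(known_by)
```

This yields about 127 people per positive case for April, 63 for May, and 44 for June, in line with the figures given above.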

Even though the question emphasizes “personally” knowing someone with Covid-19, the numbers just don’t make sense.