Confounding Factors

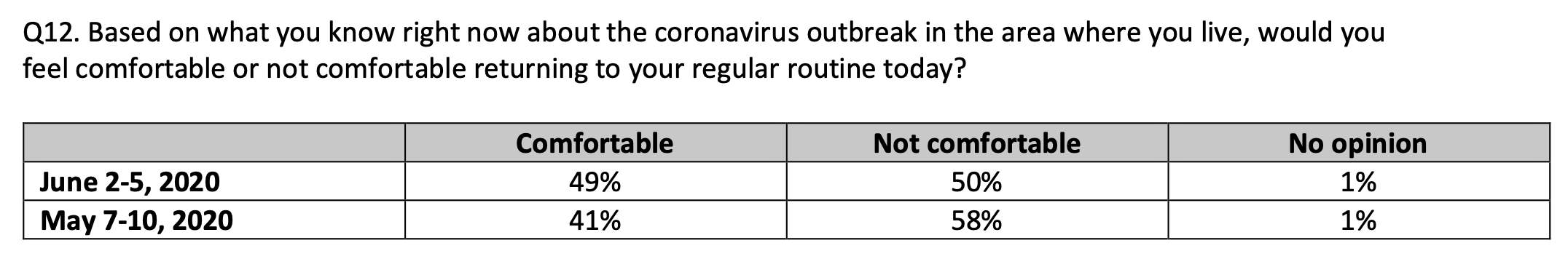

Notice that this poll question asks about returning to a regular routine based upon the coronavirus outbreak. But Covid-19 isn’t happening in a vacuum. While the virus has dominated, even overwhelmed, the news for months, in late May, just before the June polling was conducted, a new event pushed Covid-19 to the side: the protests and riots after the George Floyd killing.

Virtually every major city, along with many midsize ones, had rioting and looting, separate from the peaceful protests. Hundreds of businesses were destroyed just when they were preparing to reopen as the pandemic’s impact waned. If you live in a city, which I don’t, I would imagine that your attitude toward returning to a regular routine would be more negative after this unrest. The “new normal” has been supplanted by the “new new normal.”

As stated, Democrats are concentrated in cities, so the impact of the urban unrest would be more pronounced for that political group. Again, political party may be a proxy, this time for the impact of the civil unrest on comfort levels for returning to regular routines.
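To make the proxy idea concrete, here is a minimal sketch in Python. Every number in it is invented for illustration and none comes from the poll. It assumes, hypothetically, that comfort depends only on where a respondent lives (40% comfortable in cities, 70% elsewhere) and that the two parties differ only in their urban/rural mix.

```python
# Hypothetical respondent counts, invented purely for illustration.
# These are NOT poll results.
# (party, residence): (respondents, number who feel comfortable)
cells = {
    ("Democrat", "urban"): (80, 32),
    ("Democrat", "rural"): (20, 14),
    ("Republican", "urban"): (20, 8),
    ("Republican", "rural"): (80, 56),
}


def comfort_rate(party=None, residence=None):
    """Share of comfortable respondents, optionally filtered by party and/or residence."""
    total = comfortable = 0
    for (p, r), (n, c) in cells.items():
        if party in (None, p) and residence in (None, r):
            total += n
            comfortable += c
    return comfortable / total


parties = ("Democrat", "Republican")
residences = ("urban", "rural")

# Comparing by party alone, the way the poll reports it.
by_party = {p: comfort_rate(party=p) for p in parties}

# Comparing by party within each residence group.
by_stratum = {(p, r): comfort_rate(party=p, residence=r)
              for p in parties for r in residences}

for p in parties:
    print(f"{p}: {by_party[p]:.0%} comfortable overall")
for r in residences:
    for p in parties:
        print(f"{r:>5} {p}: {by_stratum[(p, r)]:.0%} comfortable")
```

With these made-up counts, Democrats come out at 46% comfortable and Republicans at 64%, yet within the urban group both parties sit at 40% and within the rural group both sit at 70%. The party gap is produced entirely by where people live. Whether anything like this holds in the real data is exactly what the poll cannot tell us.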

While this new discomfort is not due to the coronavirus, it would be very hard to get respondents to separate that impact from the virus impact. And the pollsters didn’t ask a question about the unrest that could be used to identify the relative impact.

Where’s the intellectual, scientific curiosity?

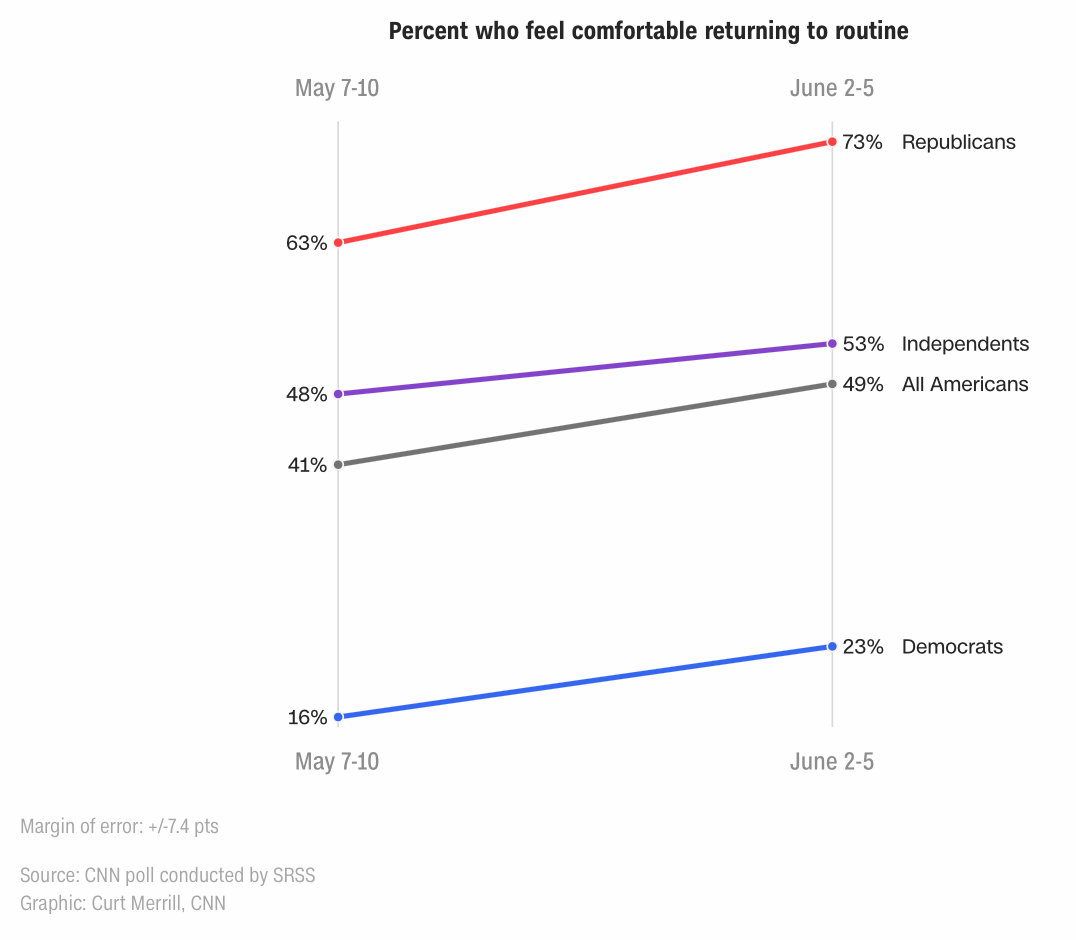

The confounding factor of the urban unrest may explain why the increase in comfort level for Democrats was less than for Republicans.