BU Example of Flawed Survey Checklist Questions

Let’s look at another example of poorly designed checklist questions. This one is from an employee survey at Boston University (BU). BU had found that employees were leaving because of the commute to the school and the lack of good housing nearby. (By way of full disclosure, I earned my graduate degrees at BU and taught there during my doctoral program.)

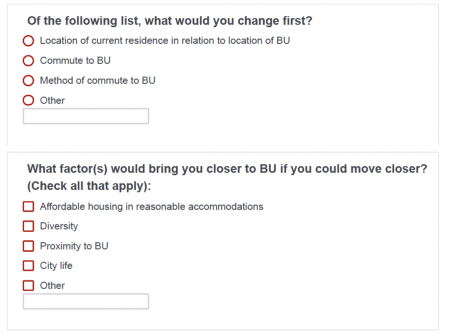

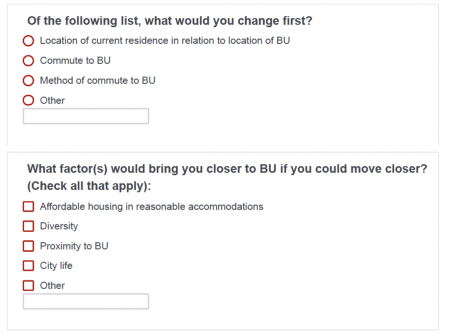

Poorly worded checklist question and checklist options

The first question is a wonderful example of overlapping response options. What’s the difference between:

- Commute to BU

- Method of commute to BU

Most times I can figure out what the survey designer meant to write. Here I can’t.

Let’s look at the next question. Earlier I said that the wording of survey checklist questions is usually straightforward. Not here; this one is convoluted. What “would bring [me] closer to BU”? Moving closer would bring me closer. D’oh! How about: “What factors might entice you to move closer to BU?”

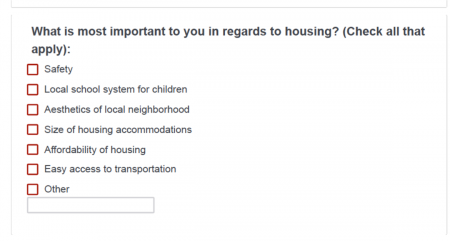

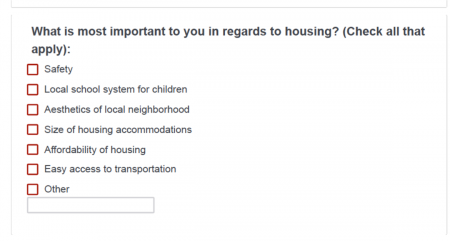

A flawed Importance question

Elsewhere I’ve written about ways to measure importance. The third question above shows how not to do it. The question asks: “What is most important…?” But then the instructions say “Check all that apply.” Those are contradictory. Either use radio buttons and ask respondents to select the single most important item, or use checkboxes and ask them to check the two most important. If everything is important, nothing is important.

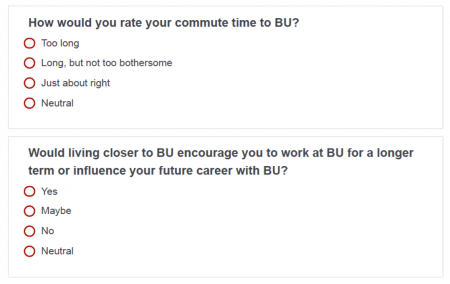

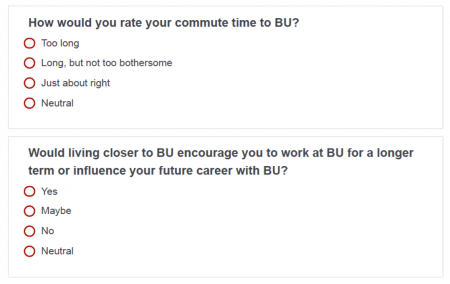

Is Neutral neutral here?

Finally, look at the response options for this last pair of questions. What does Neutral mean here? Neutral means “in the middle” or “no strong feelings in either direction.” How does that differ from “Long, but not too bothersome” and “Maybe”?

As the reader of the report, how would you interpret Neutral responses in the context of these questions? My guess is that many respondents chose Neutral when they “preferred not to answer.” Regardless, this set of response options muddies any conclusion about a course of action.

Yes, you’d think they would have tapped the research professors to design the survey, but they certainly didn’t. At least, I hope they didn’t.